Recently, the U.S. revealed that it has been using Palantir’s Maven Smart System in the conflict against Iran.

Maven is an AI system that can process data from around 150 different battlefield sources, use that data to create a view of the battlespace, recommend up to 1,000 targets per hour along with the way to strike them, and assess the resulting damage.

The system could revolutionize how the American military eliminates targets. However, it also raises questions about the dangers of military AI systems.

We’ve all made jokes about how the military’s use of AI systems could lead to a Skynet-like catastrophe, but it’s really important that we try to divorce our perceptions of AI from pop culture depictions when we’re participating in serious discourse.

One of the real dangers of AI is not that Maven, or any similar system that might emerge, is a sentient system that could choose to turn on its operator, as popular media often depicts, but rather that it is a complex piece of software that can be overwhelmed by variables until it reacts unpredictably.

When you build an AI system to take lives in combat and it then takes lives when you didn’t mean it to, that isn’t sentience; it’s bad quality control. It’s just a piece of software that didn’t work the way it was supposed to.

And this distinction really matters, because the assertion that military AI systems are secretly maturing toward sentience and then rebellion comes with a sense of inevitability that frees us from the hard conversations we need to have about how AI is best employed in warfare. It robs the topic of the nuance, complexity, and attention it deserves and boils it down to a meme.

The greatest threat that AI poses, however, is that it could fall into the hands of our enemies, whether a hostile country or a terrorist organization.

The real questions aren’t about whether or not we should use AI – we already are. Instead, the real questions are about how we should use AI safely and responsibly.

If we want safeguards on our AI systems, with assurances that they’re being used in responsible ways and that humans are always the ones making the final call when it comes to taking lives, we have to be willing to learn about and engage with what these systems actually are.

AI is already changing the face of warfare, and it’s going to continue to do so whether we like it or not. Developing AI warfighting capabilities is arguably the most important deterrent measure the U.S. military could be focused on today.

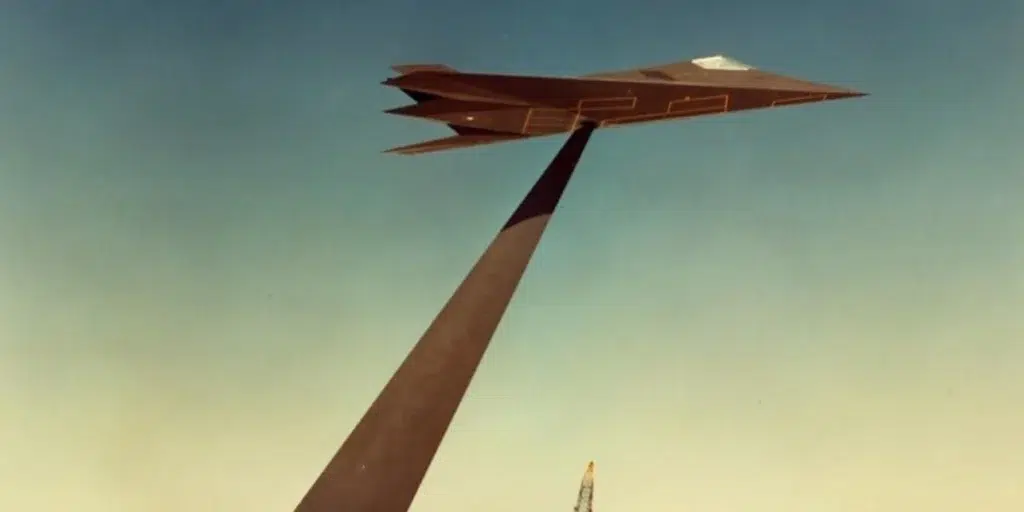

Feature Image: A soldier wears virtual reality glasses; a graphic depiction of a chess set sits in the foreground. (Illustration created by NIWC Pacific)
